For decades, sample libraries and synthesizers have been the only available means of making MIDI-based music. Their virtues and drawbacks are well known. Simply put, sample-based libraries preserve the basic timbre of the real instrument, but the result is a static sound that cannot properly morph across dynamics, vibrato, portamento, etc. This is particularly true for solo instruments. Synthesizers allow for greater expressiveness, but at the expense of realism.

Despite recent improvements, the situation has not substantially changed over the last two decades, and the difference between real and virtual instruments is still easily perceived on first listening.

The purpose of our research and development was to overcome these limitations by creating easy-to-play, expressive virtual instruments that yield musical phrases indistinguishable from those played on real instruments. This has been achieved by our sample modeling approach.

The result is a set of user-friendly virtual instruments with few MIDI controllers, which can be played in real time or from a sequencer, in standalone mode or as a plugin, on PC or Mac. The played phrases are indistinguishable from the real thing.

Samplemodeling distinguishes itself in the realm of virtual instruments by its dedication to capturing, analyzing, and faithfully reproducing the intricacies of real-world musical performances.

The video presented here serves as a compelling illustration of our cutting-edge technology, which has emerged from years of rigorous and extensive research in the analysis of the musical performance of real-world instrumentalists.

The video shows a trumpet player executing several phrases in an anechoic chamber. But the audio you hear is actually a MIDI rendering of a Samplemodeling Virtual Trumpet.

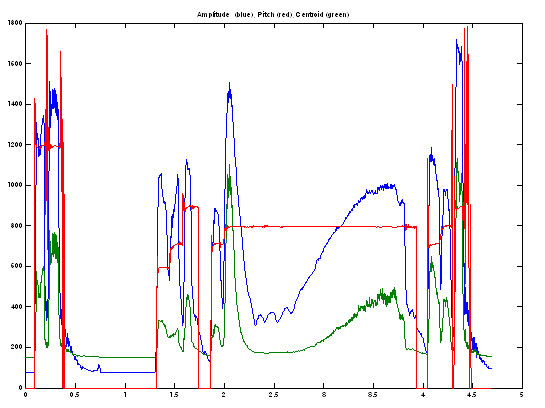

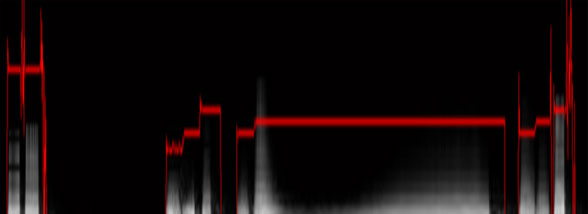

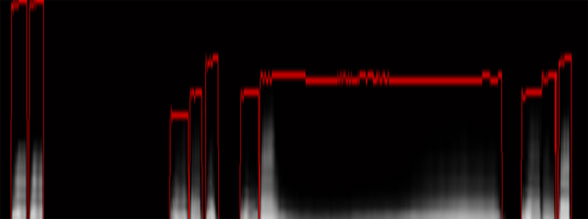

Years of thorough study have been invested in ensuring that every facet of sound is accurately reproduced. In the upcoming series of slides, we invite you to examine a side-by-side comparison. On the left, you will find a series of graphs derived from the analysis of a phrase played on a real funky trumpet in the style of Earth, Wind & Fire, showcasing the instrument’s spectral and audiometric characteristics. On the right, the same phrase is performed by a Samplemodeling Virtual Trumpet, and the graphs show a near-perfect match.

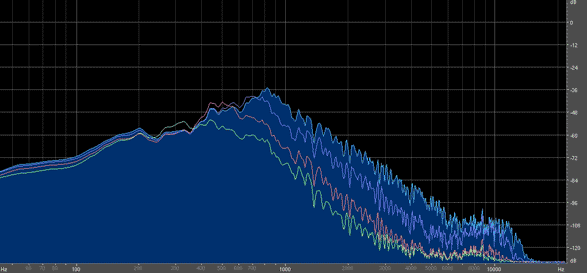

The relationship between the dynamics of a musical performance and the resulting timbre is a fundamental aspect of sound generation in both acoustic and virtual instruments. This connection plays a pivotal role in shaping the character and expressiveness of the music we hear.

We performed a spectral analysis on a chromatic series of notes spanning the two lower octaves (A#-1 – A#1), played with the Bass Trombone at various dynamics (CC11 = 20, 40, 60, 80, 100, 120). For the sake of clarity, the graph below shows only the spectra corresponding to the four lowest dynamics.
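The measurement grid described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the analysis code actually used. It assumes note names follow the "middle C = C3" convention, so that A#-1 is MIDI note 22 and A#1 is MIDI note 46, and that each recording is available as a list of samples. The helper names `midi_to_hz` and `harmonic_amplitudes` are hypothetical, and the single-bin DFT stands in for whatever spectral method was really applied.

```python
import math

# Assumption: "middle C = C3" naming (MIDI 60 = C3), so A#-1 = 22 and A#1 = 46.
LOW_NOTE, HIGH_NOTE = 22, 46
CC11_LEVELS = [20, 40, 60, 80, 100, 120]  # dynamics listed in the text


def midi_to_hz(note, a_ref_hz=440.0):
    """Equal-tempered frequency of a MIDI note (MIDI 69 = 440 Hz)."""
    return a_ref_hz * 2.0 ** ((note - 69) / 12.0)


def harmonic_amplitudes(samples, sample_rate, f0, n_harmonics=8):
    """Estimate the amplitude of each harmonic of f0 with a single-bin DFT.

    Hypothetical helper: accurate when the buffer holds a whole number
    of periods of f0; otherwise spectral leakage blurs the estimate.
    """
    n = len(samples)
    amps = []
    for k in range(1, n_harmonics + 1):
        w = 2.0 * math.pi * k * f0 / sample_rate
        re = sum(s * math.cos(w * i) for i, s in enumerate(samples))
        im = sum(s * math.sin(w * i) for i, s in enumerate(samples))
        amps.append(2.0 * math.hypot(re, im) / n)
    return amps


# One (note, CC11) pair per recording to analyze: 25 notes x 6 dynamics.
grid = [(note, cc)
        for note in range(LOW_NOTE, HIGH_NOTE + 1)
        for cc in CC11_LEVELS]
```

Comparing the output of `harmonic_amplitudes` for the same note across the CC11 levels would show how the balance between harmonics shifts with dynamics, which is the kind of dynamic-timbre relationship the analysis is meant to expose.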

© 2007/2023 Samplemodeling TM
